Artificial intelligence is no longer a future investment. Businesses across Chennai, from manufacturing units to fintech startups, are already running AI systems that cut costs, reduce errors, and speed up decisions.

But here's where most businesses run into trouble: every AI vendor in Chennai looks identical on the surface. Same buzzwords. Same service pages. Same promises about "cutting-edge solutions." Choosing the wrong one costs months and significant budget with little to show for it.

This guide gives you a factual, structured breakdown of what separates capable AI development companies in Chennai from average ones: the technologies they use, the industries they serve, the questions worth asking, and the red flags that are easy to miss when you're in the middle of vendor conversations.

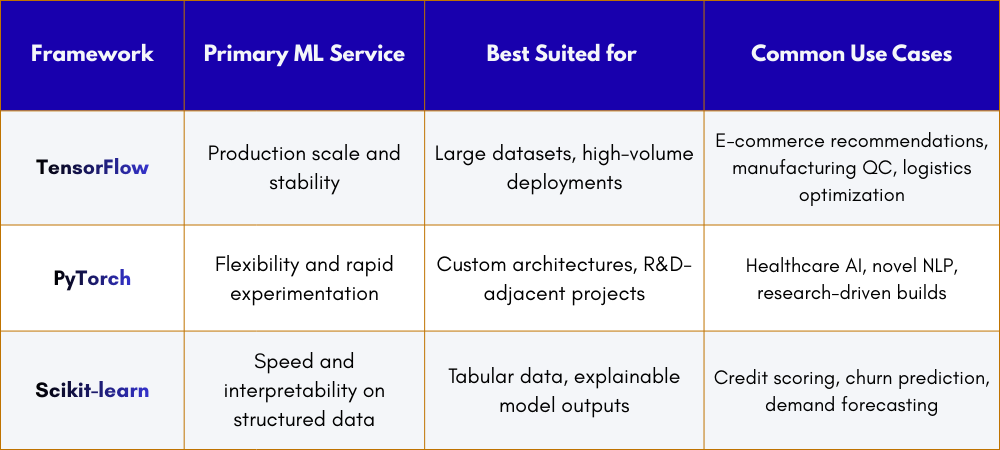

The Machine Learning Frameworks That Power Real AI Projects

Framework Comparison at a Glance

A machine learning framework is the software foundation on which AI models are built, trained, and deployed. The choice of framework determines how fast a model can be developed, how well it scales in production, and what class of problems it can handle effectively.

TensorFlow

Developed by Google, TensorFlow is a widely used choice for AI systems that need to run reliably at scale. A model can begin by processing a few thousand records and scale to millions without requiring a structural rebuild. It is particularly well-suited to image recognition pipelines, recommendation engines, and any project where production stability matters more than development speed.

PyTorch

PyTorch is the preferred framework when a project involves novel architectures or requires fast iteration. Its dynamic computation graph allows developers to modify model behaviour during training, not just before or after, which shortens experimentation cycles significantly. It is the leading framework in AI research globally and is increasingly deployed in production systems as well.

Scikit-learn

Not every AI problem requires deep learning. For structured, tabular data (the kind most businesses already generate and store), Scikit-learn provides reliable, well-understood algorithms. Its outputs are also easier to explain to non-technical stakeholders, which matters when decisions need to be audited or justified.

What this means for vendor evaluation: A team that recommends the same framework for every project is not making that decision based on your problem. Ask which framework they would use for your specific use case and why.

Natural Language Processing: How AI Understands Text and Language

Natural language processing (NLP) is the AI discipline that enables systems to read, interpret, classify, and generate human language. It is the foundation of chatbots, document review tools, sentiment analysis, automated reporting systems, and intelligent search.

NLP Tools in Active Use

- spaCy - high-speed text processing for document classification and entity extraction at scale

- BERT - deep contextual language understanding used in contract review, support ticket routing, and Q&A systems

- GPT-based models - text generation for automated report drafting, email responses, and intelligent search

- Hugging Face Transformers - the standard library for accessing and fine-tuning pre-trained models across most production NLP applications

- NLTK - used primarily for prototyping and proof-of-concept NLP development

Why Fine-Tuning Changes What NLP Can Do

Pre-trained language models learn from broad internet text. They understand general language well but perform poorly on specialized domains without additional training. Fine-tuning is the process of taking a pre-trained model and continuing its training on domain-specific data.

A model fine-tuned on medical literature understands clinical abbreviations, symptom terminology, and diagnostic context that a general model would misread. The same principle applies to legal text, Tamil-language content, financial documentation, and internal company records.

Fine-tuning typically takes days rather than months and produces substantially better accuracy than using a generic model for domain-specific tasks. It is one of the highest-leverage investments in any NLP project.

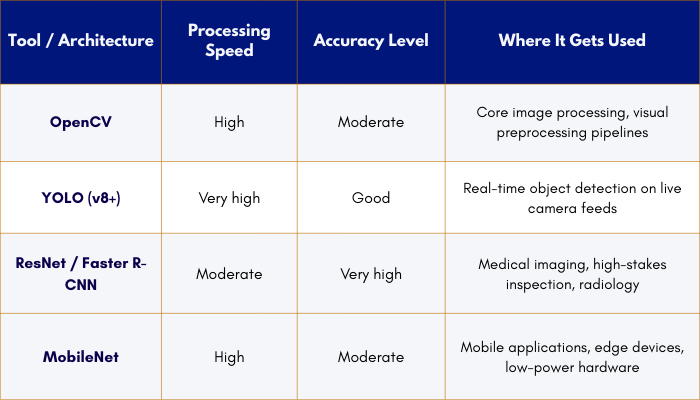

Computer Vision: How AI Processes Images and Video

Computer vision enables machines to extract meaning from visual inputs: images, camera feeds, or recorded video. It is actively used in manufacturing inspection, medical imaging, retail analytics, security monitoring, and mobile applications.

Computer Vision Tool Reference

Industry Applications in Chennai's Context

- Manufacturing: YOLO-based defect detection systems inspect products on live production lines at 30 or more frames per second, flagging issues without interrupting line speed

- Healthcare: ResNet and Faster R-CNN handle radiology image analysis, where missing a finding carries serious consequences, so accuracy takes priority over processing speed

- Retail: OpenCV pipelines monitor shelf inventory in real time, identifying gaps, misplacements, and planogram violations automatically

- Mobile: MobileNet runs inference directly on a smartphone, enabling visual features without requiring a cloud connection or fast internet
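The 30 frames per second figure in the manufacturing example translates directly into a time budget for each frame. The sketch below is a minimal illustration of that arithmetic; the function names and the sample latency figures are hypothetical and are not taken from any specific vendor's system.

```python
def frame_budget_ms(fps: float) -> float:
    """Return the time available to process one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def keeps_up(inference_ms: float, fps: float, overhead_ms: float = 0.0) -> bool:
    """True if inference plus fixed overhead fits inside the per-frame budget."""
    return inference_ms + overhead_ms <= frame_budget_ms(fps)


# At 30 fps each frame must be fully handled in roughly 33 ms.
print(f"Budget at 30 fps: {frame_budget_ms(30):.1f} ms per frame")

# Hypothetical latencies: 25 ms inference plus 5 ms capture and I/O overhead fits.
print(keeps_up(inference_ms=25.0, fps=30, overhead_ms=5.0))

# A hypothetical 40 ms model would fall behind the line.
print(keeps_up(inference_ms=40.0, fps=30))
```

When a vendor quotes a frame rate, it is reasonable to ask what the measured per-frame latency is on the actual target hardware, including capture and post-processing, not only the model's inference time.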

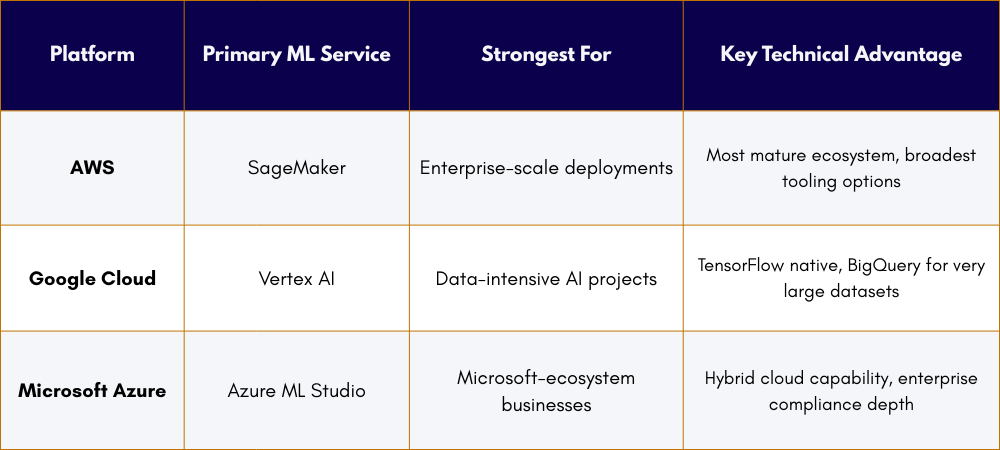

Cloud Infrastructure: Where AI Models Live and Scale

Building an AI model is one part of the work. Deploying it so it runs reliably at scale, handles traffic spikes, and stays available is a different engineering challenge entirely. Cloud platform choice affects cost, latency, compliance options, and how well the AI system integrates with the rest of a business's existing technology stack.

Cloud Platform Comparison for AI Projects

How to Decide Which Platform Fits

Three factors drive the right choice:

- Existing infrastructure - businesses already running Microsoft 365 or Azure services typically integrate AI more cleanly on Azure without additional migration overhead.

- Data volume and type - Google Cloud's BigQuery is built for querying very large structured datasets and pairs naturally with data analytics workflows.

- Compliance requirements - all three platforms carry enterprise-grade certifications, but specific regional certifications (data residency, sector-specific standards) vary and need direct verification.

A vendor recommending one platform without asking about these factors is not making a considered recommendation.

MLOps: The Difference Between a Model That Works at Launch and One That Works at Month 12

MLOps (Machine Learning Operations) is the set of practices, tools, and processes that keep AI models performing reliably after they go live. It is the most commonly overlooked factor in vendor evaluation and the most common reason AI projects underperform six to twelve months after deployment.

Why Models Degrade Without MLOps

An AI model is trained on historical data. As real-world patterns shift (customer behaviour changes, product mixes evolve, seasonal patterns vary), the gap between what the model learned and what it now encounters grows. This is called model drift. Without detection systems, accuracy declines silently until the business impact becomes impossible to ignore.
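One common way to put a number on this gap is the Population Stability Index (PSI), which compares how a feature was distributed in the training data with how it is distributed in live traffic. The sketch below is a minimal, standard-library illustration and not a description of any particular vendor's pipeline. The bin count, the sample data, and the 0.25 cut-off (a widely quoted rule of thumb) are assumptions that a real team would tune.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a training sample and a live sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("expected sample has no spread")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))  # clamp values outside the training range
            counts[idx] += 1
        eps = 1e-6  # floor for empty bins so the logarithm stays defined
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a feature as seen in training, and the same feature after a shift.
training = [i / 100 for i in range(100)]
live_shifted = [0.5 + i / 200 for i in range(100)]

DRIFT_THRESHOLD = 0.25  # assumed cut-off; a rule of thumb, not a standard
print(psi(training, training) > DRIFT_THRESHOLD)      # identical data: no drift flagged
print(psi(training, live_shifted) > DRIFT_THRESHOLD)  # shifted data: drift flagged
```

A check like this would typically run on a schedule for each important input feature, with a breach feeding into whatever retraining process the team has defined.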

Core MLOps Tools

- Docker packages models into portable containers that run consistently across environments.

- Kubernetes orchestrates containers and handles automatic scaling under variable load.

- MLflow tracks experiments, manages model versions, and enables clean rollback if a deployment fails.

- Prometheus and Grafana monitor live model performance metrics and trigger alerts when accuracy drops below defined thresholds.
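The alerting logic behind the last item can be sketched without any monitoring stack. The example below tracks accuracy over a rolling window of recent predictions and signals when it falls below a threshold. The class name, window size, and threshold are hypothetical; in a Prometheus and Grafana setup the accuracy value would be exported as a metric and the alert rule would live in the monitoring configuration, not in application code.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent predictions that have known outcomes."""

    def __init__(self, window: int = 100, threshold: float = 0.9):
        self.outcomes = deque(maxlen=window)  # oldest results drop off automatically
        self.threshold = threshold

    def record(self, predicted, actual) -> None:
        """Store whether one prediction matched the eventual ground truth."""
        self.outcomes.append(predicted == actual)

    def accuracy(self) -> float:
        if not self.outcomes:
            raise ValueError("no outcomes recorded yet")
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        """Alert only once the window is full, to avoid noise from tiny samples."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.threshold


# Hypothetical usage with a deliberately small window.
monitor = RollingAccuracyMonitor(window=4, threshold=0.75)
for predicted, actual in [(1, 1), (1, 1), (1, 1), (1, 0)]:
    monitor.record(predicted, actual)
print(monitor.accuracy(), monitor.should_alert())  # at the threshold, no alert yet

monitor.record(0, 1)  # one more miss pushes the window below the threshold
print(monitor.accuracy(), monitor.should_alert())
```

Accuracy-based alerts depend on ground-truth labels arriving after the prediction, which can take days or weeks in some domains. That delay is one reason input-distribution checks are often run alongside them.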

The Question to Ask Every Vendor

"How do you detect model drift, and what triggers a retraining cycle?"

A team with a specific, process-level answer has operated AI systems in production over time. A team that responds vaguely or defers the question has likely only delivered models to staging environments, not sustained them in real use.

Industry-Specific AI Applications Across Chennai's Economy

Different industries require different tools, different data handling standards, and different compliance frameworks. Generic AI capability is not the same as domain-specific AI experience.

1. Healthcare

Tools: PyRadiomics, DICOM processing libraries, MONAI

- Medical image analysis and diagnostic decision support

- Patient risk stratification and clinical scoring models

- Clinical document processing and report automation

Data handling practices modelled on HIPAA are worth applying even for domestic Indian deployments, although HIPAA itself is US legislation.

2. Financial Services

Tools: Prophet, Scikit-learn, custom anomaly detection pipelines

- Fraud detection on live transaction data

- Credit risk scoring and automated KYC document review

- Time series forecasting for portfolio and risk management

RBI data localisation requirements apply; verify on-premises deployment availability before engaging a vendor.
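To make "anomaly detection" concrete, here is a minimal sketch of one simple statistical approach: a modified z-score based on the median, which is less distorted by the outliers it is trying to find than a mean-based score. It is an illustration only. Production fraud systems typically combine many features and models, and the transaction amounts and the 3.5 cut-off used here are assumptions.

```python
import statistics
from typing import List, Sequence, Tuple


def flag_anomalies(amounts: Sequence[float], threshold: float = 3.5) -> List[Tuple[int, float]]:
    """Return (index, amount) pairs whose modified z-score exceeds the threshold."""
    median = statistics.median(amounts)
    # Median absolute deviation: a robust measure of typical spread.
    mad = statistics.median([abs(a - median) for a in amounts])
    if mad == 0:
        return []  # no spread in the data, so nothing can be scored
    flagged = []
    for i, amount in enumerate(amounts):
        score = 0.6745 * (amount - median) / mad
        if abs(score) > threshold:
            flagged.append((i, amount))
    return flagged


# Hypothetical transaction amounts with one obvious outlier.
transactions = [120, 135, 110, 150, 125, 140, 130, 9800]
print(flag_anomalies(transactions))
```

A rule like this is easy to explain to an auditor, which is part of why simple statistical checks often sit alongside more complex models in financial pipelines.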

3. Manufacturing

Tools: YOLO, ONNX, TensorRT

- Real-time defect detection on production lines at 30+ frames per second

- Predictive maintenance on industrial equipment

- Energy consumption optimisation across factory operations

Edge deployment on factory hardware removes cloud round-trip latency and lets inspection continue without depending on a network connection.

4. Retail & E-commerce

Tools: TensorFlow Recommenders, collaborative filtering, demand forecasting models

- Personalised product recommendation engines

- Inventory optimisation and automated restocking alerts

- Customer segmentation for targeted marketing campaigns

Standard data privacy practices apply - masking customer data before processing is recommended as a baseline.
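As a rough illustration of what masking before processing can look like, the sketch below replaces a customer identifier with a keyed hash, partially hides an email address, and drops a field the model does not need. The field names, the sample record, and the key handling are hypothetical. A real deployment would load the key from a secrets manager and follow its own data classification policy.

```python
import hashlib
import hmac


def pseudonymise(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash so records can still be joined."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def mask_email(email: str) -> str:
    """Keep the first character and the domain, hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not domain:
        return "***"
    return f"{local[:1]}***@{domain}"


def mask_record(record: dict, secret_key: bytes) -> dict:
    """Return a masked copy of the record; the original is left unchanged."""
    masked = dict(record)
    masked["customer_id"] = pseudonymise(record["customer_id"], secret_key)
    masked["email"] = mask_email(record["email"])
    masked.pop("phone", None)  # drop fields the model does not need
    return masked


# Hypothetical record and key, for illustration only.
key = b"demo-key-do-not-use-in-production"
record = {
    "customer_id": "C1001",
    "email": "priya@example.com",
    "phone": "9999999999",
    "basket_value": 1450,
}
print(mask_record(record, key))
```

Using a keyed hash instead of a plain hash means someone without the key cannot rebuild the mapping by hashing a list of known customer IDs.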

5. Logistics

Tools: Route optimisation algorithms, time series demand models, fleet analytics

- Delivery route planning and dynamic real-time re-routing

- Warehouse demand prediction and stock positioning

- Fleet maintenance scheduling based on usage patterns

GPS and location data handling should align with India's Digital Personal Data Protection Act (DPDPA).

AutoML vs. Custom Development: Choosing the Right Approach

AutoML platforms automate portions of the machine learning pipeline (feature selection, model selection, hyperparameter tuning) that traditionally require significant data scientist time. Platforms like Google AutoML, H2O.ai, and DataRobot can deliver working models in days rather than months for well-defined problems.

AutoML is not an inferior approach. It is the right tool for a specific category of problem. A vendor who recommends full custom development for every project regardless of scope is not giving objective advice, and neither is one who defaults to AutoML to keep costs low on problems that genuinely need custom work.
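At its simplest, the tuning step that AutoML automates is a search: try each candidate configuration, score it on held-out data, and keep the best. The sketch below shows that idea with a toy threshold classifier and an exhaustive grid. It does not use or represent the API of any of the platforms named above, and the data and candidate values are hypothetical.

```python
from itertools import product

# Hypothetical validation set: (feature value, true label) pairs.
VALIDATION = [(0.1, 0), (0.3, 0), (0.45, 0), (0.55, 1), (0.7, 1), (0.9, 1)]


def predict(x: float, threshold: float, invert: bool) -> int:
    """Toy classifier: label 1 when x reaches the threshold, optionally flipped."""
    label = x >= threshold
    return int(not label) if invert else int(label)


def score(params, data) -> float:
    """Fraction of validation examples the configuration classifies correctly."""
    threshold, invert = params
    hits = sum(predict(x, threshold, invert) == y for x, y in data)
    return hits / len(data)


def grid_search(thresholds, inverts, data):
    """Evaluate every combination and return the best one with its score."""
    candidates = list(product(thresholds, inverts))
    best = max(candidates, key=lambda p: score(p, data))
    return best, score(best, data)


best_params, best_score = grid_search([0.25, 0.5, 0.75], [False, True], VALIDATION)
print(best_params, best_score)
```

Real AutoML platforms search far larger spaces and use smarter strategies than an exhaustive grid, but the trade-off is the same: the search is only as good as the problem definition and validation data it is given.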

How to Evaluate an AI Development Company in Chennai

The Six Criteria That Actually Separate Strong Vendors from Average Ones

1. Team Composition: Production AI requires data scientists, ML engineers, software engineers for system integration, and QA staff working together. A team composed only of data scientists can build models but cannot deliver a reliable production system. Ask specifically who is on the team, what each person's role is, and who handles post-deployment monitoring.

2. Framework Breadth in Production: Ask which frameworks they have deployed to production, not merely experimented with in internal projects. Production experience across TensorFlow, PyTorch, and major cloud ML platforms indicates a team that has navigated real deployment challenges across different problem types.

3. MLOps Maturity: Ask how they detect model drift and what triggers a retraining cycle. Specific, process-level answers indicate operational experience. Vague answers indicate the team has not sustained AI systems past the initial launch phase.

4. Industry Experience: Request case studies from your specific sector. Domain knowledge shortens timelines and reduces errors that come from models that are technically sound but contextually wrong for the industry.

5. Post-Deployment Support Scope: Clarify exactly what ongoing support includes: monitoring frequency, retraining triggers, SLA response times, and whether support is time-limited or ongoing. Support terms vary significantly between vendors and are rarely detailed upfront.

6. Data Security and Compliance: Verify ISO 27001 certification, NDA terms that cover data ownership, data handling procedures, and whether on-premises or private cloud deployment is available for sensitive data.

Conclusion

Most businesses approach AI vendor selection the same way they approach any software purchase: comparing pricing, reviewing portfolios, and checking references. That approach misses the factors that actually determine whether an AI project succeeds over time.

By this point you know which frameworks signal genuine production experience. You know what MLOps maturity looks like and why its absence is a serious operational risk. You know the difference between a team that has deployed AI and a team that has only built models. You know what questions to ask and how specific answers differ from rehearsed ones.

If you are evaluating AI development companies in Chennai and want a direct, no-pitch conversation about your specific problem, reach out to the Trimsel team. We will assess your problem, review your data situation, and give you an honest view of what is viable, what timeline is realistic, and whether we are the right fit, nothing more.